Yeah, I recently set up Immich, and while it solves a much narrower problem (an image and video library), it’s so much more responsive and feels much more “put together”.

I thought the current consensus was that there is no reliable difference between the brains of the sexes? I am by no means well informed on this.

Could that mean that at some point one could detect transgender people using medical testing?

Hmmm, I never had a problem finding what I want from public sources. Maybe my tastes in media are not refined enough.

There is no incentive, but I still seed everything I download to at least a ratio of 2, and mostly without a cap, especially obscure stuff.

I also like to not even use public trackers, instead relying solely on the mighty distributed hash table (DHT) to find peers.

I never understood why people prefer private trackers.

The hero we need

Hmmm. Maybe I should try that then. Never actually understood why people like these managers as I was always satisfied with the directory tree for organization.

Well, maybe except for music. There, beets fucking rocks. But in the end I also only use it to sort music into a directory structure.

What’s wrong with just folders and file names?

Aww, the poor turtle is trying their very best.

Unpopular opinion, but Proton works better than native games in many cases. Tbf I don’t know about these titles in particular.

I would love it if devs would target Proton / Wine as a platform: using interfaces that are (probably) familiar to them while optimizing for use with Proton, e.g. using Vulkan instead of DirectX.

I think that is actually happening with devs targeting the Steam Deck.

The only way to make Rust segfault is by performing unsafe operations.

Challenge accepted. The following Rust code technically segfaults:

    fn stackover(a: i64) -> i64 {
        return stackover(a);
    }

    fn main() {
        println!("{}", stackover(100));
    }

A stack overflow is technically a segmentation violation. At least on Linux the program receives the SIGSEGV signal.

This compiles, and I am no Rust dev, but this does not use unsafe code, right?

While the compiler shows a warning, the error message the program prints when run is not very helpful IMHO:

    thread 'main' has overflowed its stack
    fatal runtime error: stack overflow
    [1] 45211 IOT instruction (core dumped) ../target/debug/rust

Edit: Even the compiler warning can be tricked by making it do recursion in pairs:

    fn stackover_a(a: i64) -> i64 {
        return stackover_b(a);
    }

    fn stackover_b(a: i64) -> i64 {
        return stackover_a(a);
    }

    fn main() {
        println!("{}", stackover_a(100));
    }
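
For contrast, here is a minimal sketch of the same kind of recursion with a base case, run on a thread with an explicitly sized stack. The names `depth` and `depth_on_big_stack` and the 64 MiB stack size are just my picks for illustration. The point is only that the stack is the limited resource here, and again no unsafe is involved:

```rust
use std::thread;

// Hypothetical helper: recurses n levels deep, then unwinds.
// Not tail-recursive, so each level needs its own stack frame
// (unless the optimizer rewrites it into a loop).
fn depth(n: u64) -> u64 {
    if n == 0 {
        0
    } else {
        1 + depth(n - 1)
    }
}

// Runs `depth` on a thread whose stack size we choose ourselves.
fn depth_on_big_stack(n: u64) -> u64 {
    thread::Builder::new()
        .stack_size(64 * 1024 * 1024) // assumed size: 64 MiB
        .spawn(move || depth(n))
        .expect("failed to spawn thread")
        .join()
        .expect("thread panicked")
}

fn main() {
    println!("{}", depth_on_big_stack(100_000));
}
```

With a base case the recursion terminates, and how deep it can go depends on the stack size the thread was given.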

Yes, this maximally decentralized usage, where everybody has their own copy but can collaborate and pick and choose from other copies, was a central idea in the creation of Git. Ultimately it was made for Linux kernel development, and that is how it works over there.

You do not even need to use Git-specific protocols. One can simply export patch sets (`git format-patch`), mail them to each other, and apply them (`git am`).

Git was made to work in a decentralized way, and repositories are trivial to mirror.

Btw I didn’t downvote you.

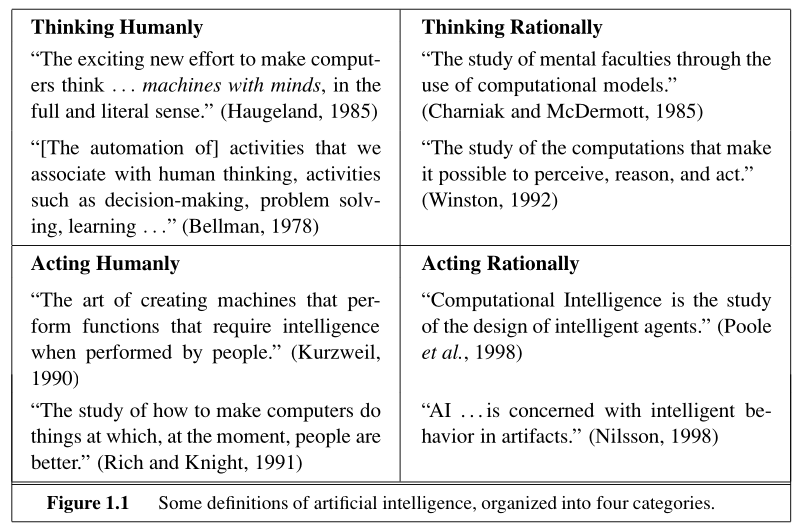

Your reply raises the question of which definition of AI you are using.

The above is from Russell and Norvig’s “Artificial Intelligence: A Modern Approach”, 3rd edition.

I would argue that of these 8 definitions, 6 apply to modern deep learning stuff. Only the category titled “Thinking Humanly” would agree with you, but I personally think those definitions are self-defeating, i.e. they define AI in a way that is so dependent on humans that a machine could never have AI, which would make the word meaningless.

What algorithm are you referring to?

The fundamental ideas may not have changed: using matrix multiplication plus a nonlinear function, deep learning (i.e. backpropagating derivatives), and gradient descent in general. But the actual algorithms sure have.

For example, the transformer architecture (used by most modern models) based on multi-headed self-attention, optimizers like AdamW, and the whole idea of diffusion for image generation are, I would say, quite disruptive.

Another point is that generative AI was always belittled in the research community until around 2015 (a subjective feeling; it would need a meta-study to confirm). The focus was mostly on classification, something not much talked about today in comparison.
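
To make the unchanged fundamentals concrete, here is a minimal sketch in plain Rust: one neuron (a 1x2 "matrix" times the input, plus a bias, through tanh), with the derivative worked out by hand via the chain rule and used for gradient descent. The sample, starting weights, and learning rate are made up for illustration, and this is nowhere near how a real framework does it:

```rust
// Forward pass: weighted sum (a tiny matrix multiplication) plus tanh.
fn forward(w: &[f64; 2], b: f64, x: &[f64; 2]) -> f64 {
    (w[0] * x[0] + w[1] * x[1] + b).tanh()
}

// Squared error between prediction and target.
fn loss(y: f64, target: f64) -> f64 {
    (y - target).powi(2)
}

// One gradient descent step; the chain rule here is backprop by hand.
fn step(w: &mut [f64; 2], b: &mut f64, x: &[f64; 2], target: f64, lr: f64) {
    let y = forward(w, *b, x);
    let dl_dy = 2.0 * (y - target); // d loss / d output
    let dy_dz = 1.0 - y * y;        // derivative of tanh
    let dl_dz = dl_dy * dy_dz;
    w[0] -= lr * dl_dz * x[0];
    w[1] -= lr * dl_dz * x[1];
    *b -= lr * dl_dz;
}

// Trains on a single made-up sample; returns (loss before, loss after).
fn train(steps: usize) -> (f64, f64) {
    let x = [1.0, -2.0];
    let target = 0.5;
    let mut w = [0.1, 0.2];
    let mut b = 0.0;
    let before = loss(forward(&w, b, &x), target);
    for _ in 0..steps {
        step(&mut w, &mut b, &x, target, 0.1);
    }
    let after = loss(forward(&w, b, &x), target);
    (before, after)
}

fn main() {
    let (before, after) = train(100);
    println!("loss before: {:.4}, after: {:.6}", before, after);
}
```

Everything newer (attention, AdamW, diffusion) changes what is computed in the forward pass or how the update step is done, not this basic loop.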

That was such a culture shock when I went to the US for the first time.

In Germany and many places in Europe, people do not think of burgers as sandwiches. I was so confused when I ordered a sandwich and got something like a burger.

I expected something like this. My confusion must’ve been quite the sight, the waitress even seemed concerned. Tasted great though.

NumPy can use BLAS packages that are partly written in Fortran.

In assessing risk assume everyone is a bumbling idiot. For we all have moments of great stupidity.

There is definitely a risk in changing it. Many automation systems that assumed there is a master branch needed to be changed. That is trivial, yes, but changing a perfectly running system is always a potential risk.

Also stuff like tutorials and documentation become outdated.

Why are Americans so riled up about this?

Do you guys not realize that this reaction is literally one of the reasons they do this? To be a distraction and to show their base that they made the bad people™ cry about it.

They had the same problem when it was relatively more popular.